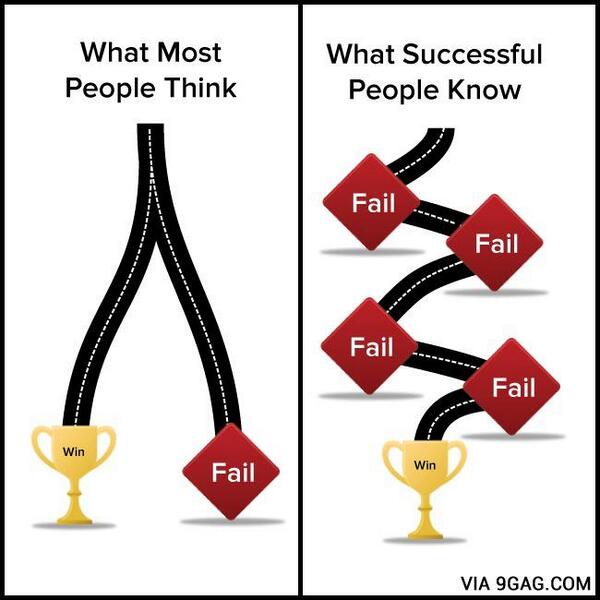

Learning from Failure

-

Institutional ‘Forgetting’ and The Failure of Corporate Memory

Corporate amnesia, or ‘institutional forgetting’, is a phenomenon where organisations lose valuable knowledge, experience, and insights over time. This can be a gradual process or a sudden occurrence, and it can have significant negative impacts on an organisation’s performance, decision-making,… Continue reading

-

The Difference Between Good And Bad Organisations

Both good and bad organisations make mistakes, but the good ones are better at learning from them. Continue reading

-

How To Make Decisions In A World Of Uncertainty When Not Knowing Or Being Sure Of Anything Is The Only Answer We Have (TLDR: Get comfortable with failure..)

In a high-stakes environment, where people will die whatever you do next, nobody wants to talk about failure. For companies large and small, making progress in complex situations means re-evaluating our relationship with the F Word. Continue reading

-

What Coronavirus Tells Us About Risk

As I sit down to write this post, I’ve just received an email from a weekly design blog I subscribe to. This edition is titled, alarmingly, ‘Pandemic Prep’. It begins “We are interrupting our regularly scheduled newsletter format and… Continue reading

-

Is the social sector really getting better at learning from failure?

Guest post with Shirley Ayres, Chris Bolton and Roxanne Persaud. Innovation in the digital sphere can be complex and risky, and there are not sufficient opportunities to share learning from failure. One year on from the Practical Strategies For Learning… Continue reading